What Is Duplicate Content?

Duplicate content is identical or highly similar content that appears in more than one place online.

So even if a piece of content isn’t an exact copy of another page, it can still be considered a duplicate if it’s similar enough to that other page.

Here’s what identical and similar content look like:

There can be duplicate content across different webpages on your site. Or across separate websites.

To be considered a duplicate, a piece of content needs to have the following:

- Noticeable overlap in wording, structure, and format with another piece

- Little to no original information

- No added value for the reader compared to a similar page

In this article, we’ll explain how duplicate content impacts SEO and cover five common causes of it. We’ll also show you how to find and fix duplicate content issues.

Let’s start with the SEO impact.

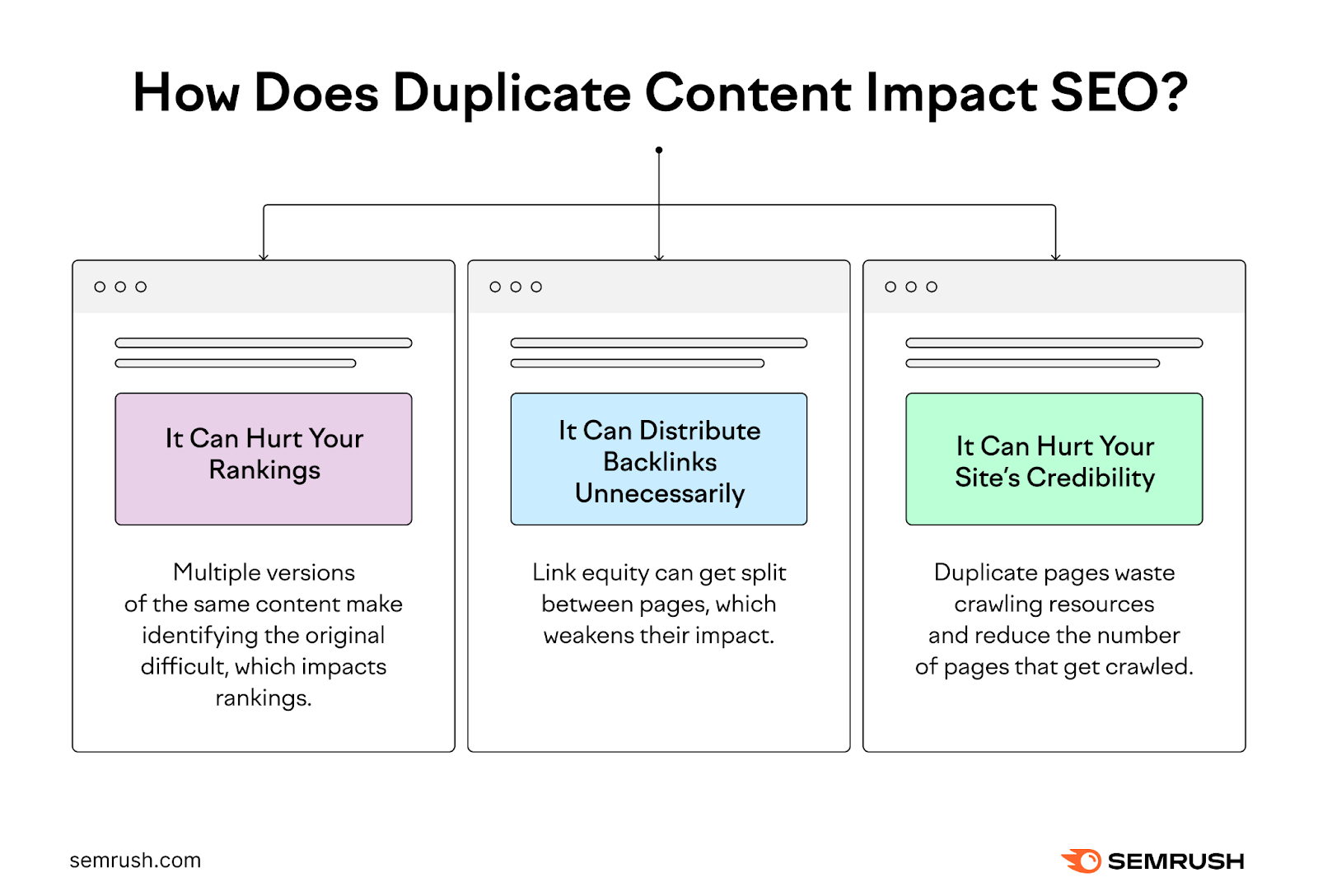

How Does Duplicate Content Impact SEO?

There’s no Google penalty for duplicate content unless the intent behind it is to “be deceptive and manipulate search engine results.”

So, why is having duplicate content an issue for SEO? Let’s take a look:

It Can Hurt Your Rankings

Google’s goal is to present searchers with pages that contain original, helpful information. Not pages that simply rehash content already found elsewhere (including content within your own website).

Which is why they have search ranking systems designed to prioritize original content when ranking results.

So, if you have multiple pages that look alike, Google will do its best to identify which page is the original.

But if it can’t identify the original, your rankings could suffer. And the page might not rank at all.

And if your content does rank, the version that Google chooses might not be the version that you want to appear in search engine results pages (SERPs).

It Can Distribute Backlinks Unnecessarily

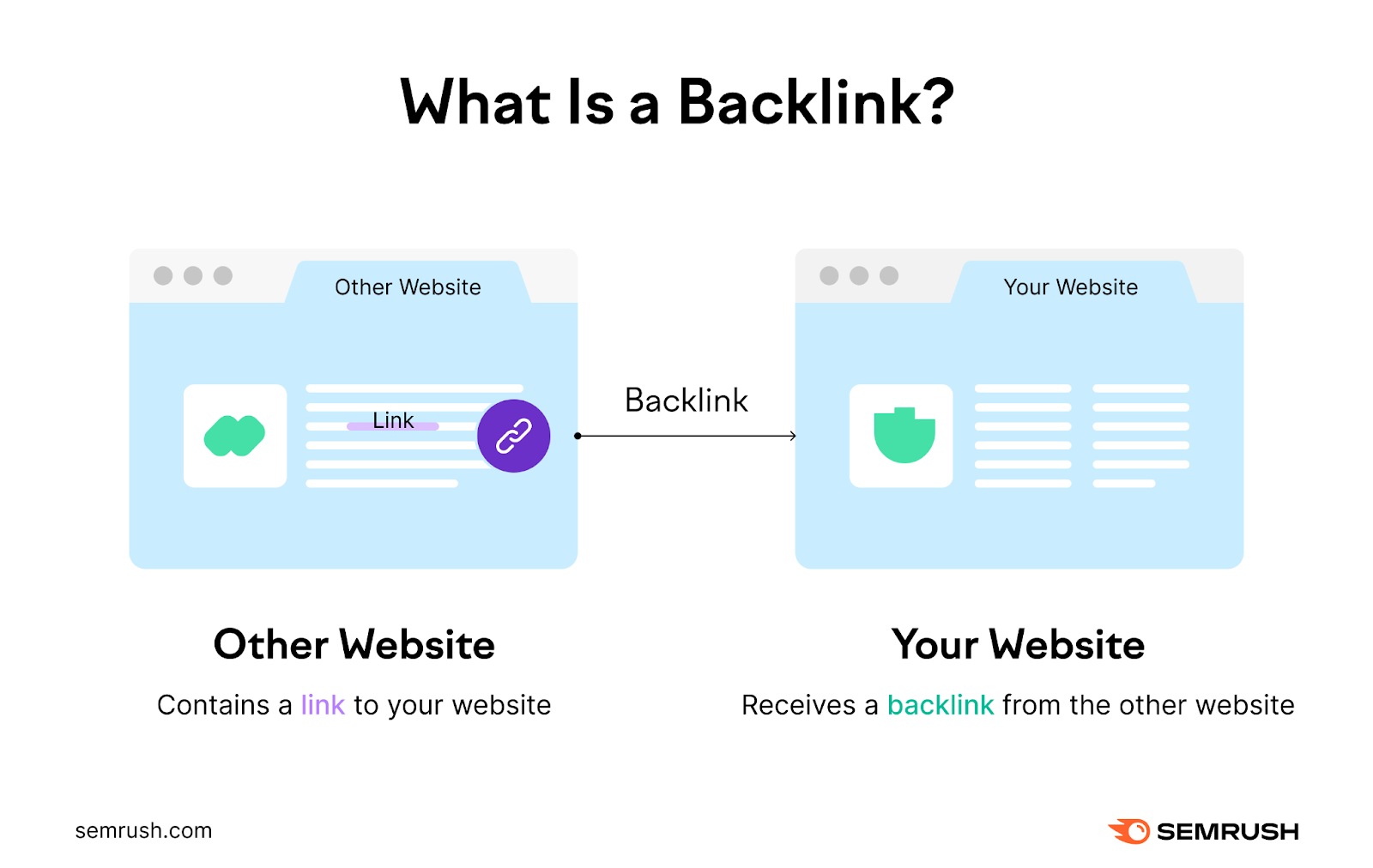

Backlinks are links on other websites that point to your site.

Each backlink is like a vote of confidence from that other website. Which tells Google that your content is probably accurate and helpful.

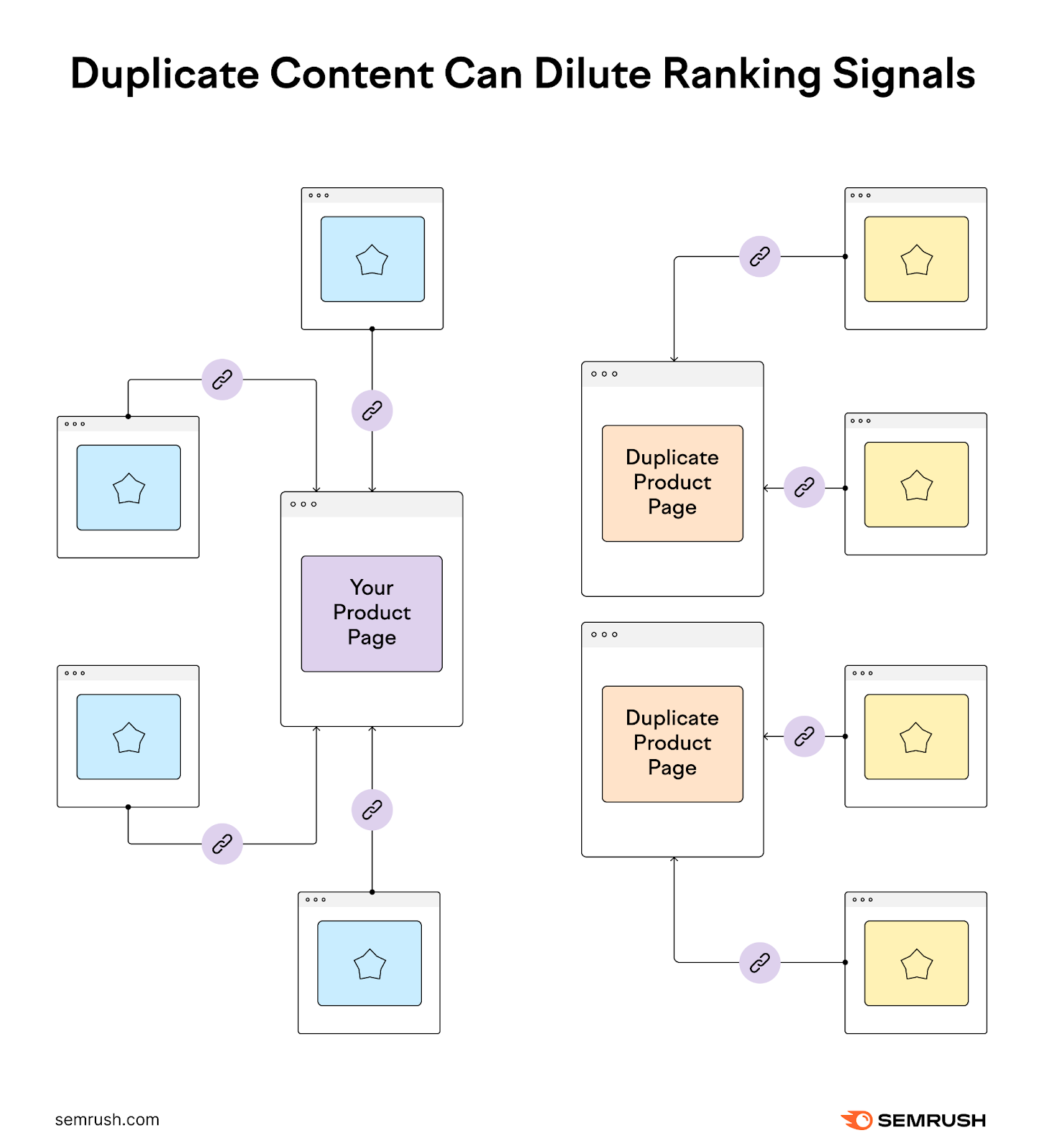

Having two or more versions of a single piece of content can dilute link equity—the reputation and authority that gets passed from one page to another through a backlink.

Here’s why.

Let’s say you have two identical pages with the following URLs:

- https://www.gardeningwebsite.com/gardening/planting-flowers

- https://www.gardeningwebsite.com/flowers/planting-flowers

Other sites can link to either version. So if you have 50 backlinks across those two pages, 30 of them might point to the first URL while the remaining 20 point to the second one.

Instead of having one page strengthened with 50 backlinks, you get two pages with fewer backlinks each.

This distribution can potentially lead to lower search engine rankings since neither page gains as much authority as a single page would.
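The arithmetic behind that example can be sketched in a few lines of Python. This is a deliberate simplification: search engines don't literally add up backlink counts, and the `canonical_for` mapping and `consolidate` helper are hypothetical names used only for illustration.

```python
from collections import Counter

# Backlink counts per URL, using the numbers from the example above.
backlinks = {
    "https://www.gardeningwebsite.com/gardening/planting-flowers": 30,
    "https://www.gardeningwebsite.com/flowers/planting-flowers": 20,
}

# Assumption: the first URL is picked as the preferred version.
canonical_for = {
    "https://www.gardeningwebsite.com/flowers/planting-flowers":
        "https://www.gardeningwebsite.com/gardening/planting-flowers",
}

def consolidate(counts, canonical_map):
    """Sum backlink counts under each URL's preferred version."""
    totals = Counter()
    for url, count in counts.items():
        totals[canonical_map.get(url, url)] += count
    return dict(totals)

consolidated = consolidate(backlinks, canonical_for)
print(consolidated)  # one URL credited with all 50 backlinks
```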

It Can Hurt Your Site’s Crawlability

Search engines like Google need to crawl and index (i.e., find and store) your content for it to show up in search results.

Duplicate pages waste your crawl budget (the amount of time and resources search engine crawlers devote to crawling your site before moving on). Because crawlers can end up reviewing multiple versions of the same content.

This reduces the number of pages that can get crawled. Which can impact your site’s visibility in search results.

Further reading: Crawlability & Indexability: What They Are & How They Affect SEO

5 Common Causes Behind Accidental Duplicate Content

There are many reasons why content can get accidentally duplicated. Most involve how a site handles URL variations. Others involve copied content.

Here are five common causes:

1. Improperly Managing WWW and Non-WWW Variations

Users can often access websites through both a URL including “www” at the beginning and a URL without it.

If your site is accessible both ways and you don’t manage these variations properly, it can lead to duplicate content issues.

Imagine your website is a house with multiple entrances. Some people might enter your house through the front door using “www.example.com.” And others may enter through the back door using “example.com.”

Even though it’s the same house, the URL variations can make it look like two separate ones to search engines.

2. Granting Access with Both HTTP and HTTPS

Having your website be accessible through both HTTP and HTTPS protocols can also lead to duplicate content.

This is like having a regular door with the URL “http://example.com” for some visitors. And a super-secure, locked door with the URL “https://example.com” for others.

Search bots see these as doors to different houses if you don’t tell them which door is the main entrance.

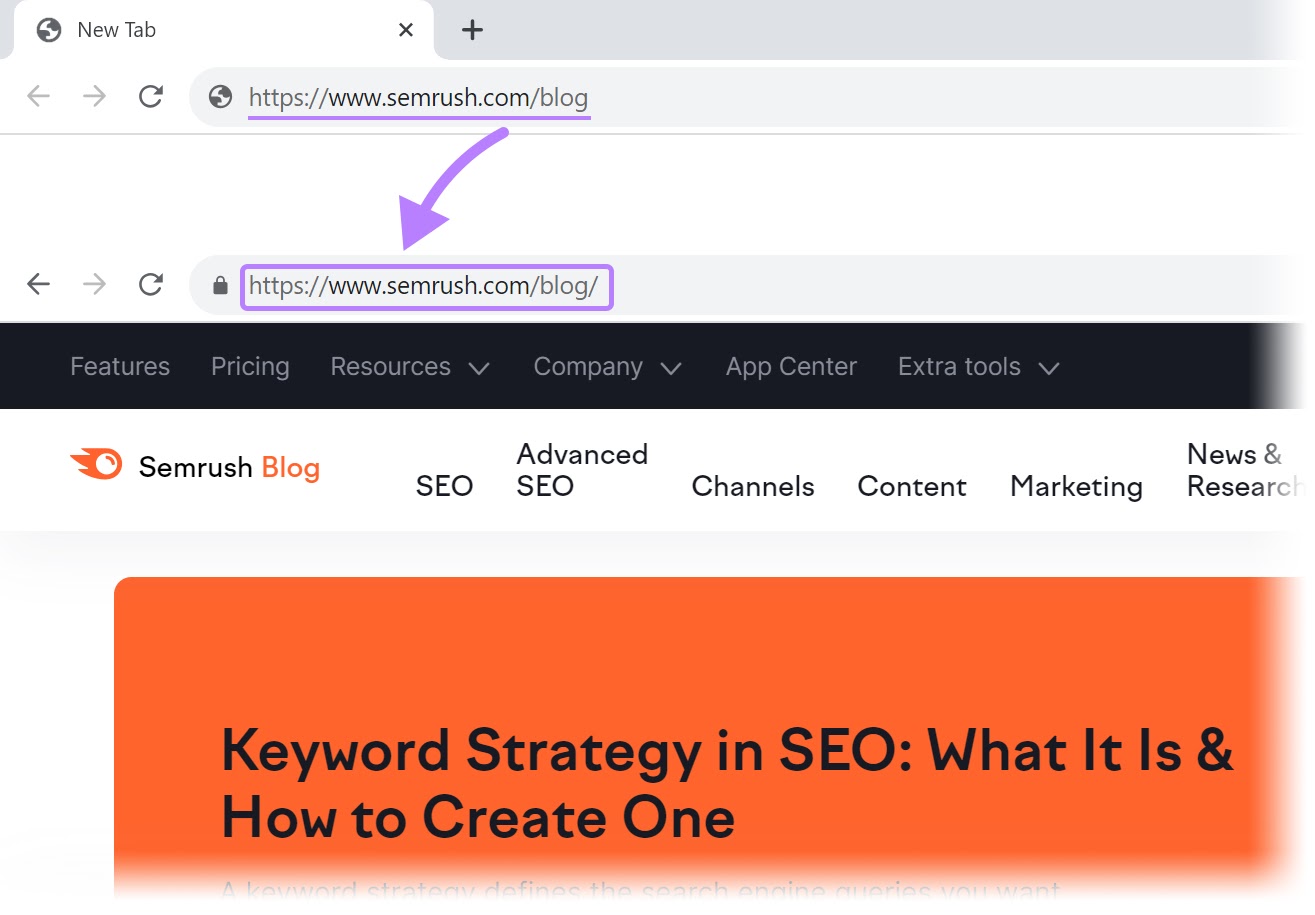

3. Using Both Trailing Slashes and Non-Trailing Slashes

Google treats a URL with a trailing slash (“/”) and the same URL without one as two separate URLs. If both serve the same page, you end up with duplicate content.

For example, search engines would treat the following as two distinct URLs:

- www.example.com/page/

- www.example.com/page

To avoid this duplication, pick an approach to trailing slashes on your page URLs and stick to it. (More on how to use 301 redirects to fix this issue soon.)

We’ve done this on our own blog.

So, if you enter “https://www.semrush.com/blog” into your browser, you’ll immediately be redirected to “https://www.semrush.com/blog/”
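To spot the first three causes in a list of your own URLs, you could normalize each URL to one preferred form and group the ones that collapse together. Here's a minimal sketch using Python's standard library. The preferred form (HTTPS, “www,” trailing slash) is an assumption, the function names are hypothetical, and the slash handling assumes directory-style page URLs rather than file names like “page.html.”

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit

def normalize_url(url, scheme="https", use_www=True, trailing_slash=True):
    """Rewrite a URL into one preferred form (assumed: https + www + slash)."""
    # urlsplit needs a scheme to recognize the host, so add one if missing.
    parts = urlsplit(url if "://" in url else f"{scheme}://{url}")
    host = parts.netloc.lower()
    bare = host[4:] if host.startswith("www.") else host
    host = f"www.{bare}" if use_www else bare
    path = parts.path.rstrip("/")
    if trailing_slash or not path:  # the root path is always "/"
        path += "/"
    return urlunsplit((scheme, host, path, parts.query, ""))

def group_variants(urls):
    """Return only the preferred URLs that more than one variant maps to."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize_url(url)].append(url)
    return {key: found for key, found in groups.items() if len(found) > 1}

urls = [
    "http://example.com/page",
    "https://example.com/page/",
    "https://www.example.com/page",
    "www.example.com/page/",
    "https://www.example.com/other/",
]
variants = group_variants(urls)
for preferred, found in variants.items():
    print(preferred, "<-", found)
```

Any group with more than one entry is a set of URLs that should probably resolve to a single address.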

4. Including Scraped or Copied Content

Content scraping happens when someone copies content from a website and publishes it on another site without permission or giving proper attribution.

But Google is generally pretty good at distinguishing between the original source and the copied content. They’ve previously written about how they treat scraped content, saying:

You shouldn’t be very concerned about seeing negative effects of your site’s presence on Google if you notice someone scraping your content.

5. Having Separate Mobile and Desktop Versions

One way you can structure your site to make it mobile-friendly is to use separate URLs for desktop and mobile versions.

For example, you might use “example.com” for desktop users. And “m.example.com” for mobile users.

This approach lets you tailor the content and design specifically for mobile devices, to ensure a more user-friendly experience.

But if not implemented correctly, using separate URLs for mobile and desktop versions can lead to duplicate content issues.
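One commonly documented way to implement separate URLs correctly is to annotate each pair of pages: the desktop page points to its mobile counterpart with a rel="alternate" link, and the mobile page points back to the desktop page with a rel="canonical" link. The Python sketch below only builds those two tags. The function name is hypothetical, and the 640px breakpoint is an illustrative value, not a requirement.

```python
from html import escape

def separate_url_annotations(desktop_url, mobile_url, max_width_px=640):
    """Build the <head> tags that tie a desktop page to its mobile twin."""
    # Goes on the desktop page: "a mobile version of this page lives here".
    desktop_tag = (
        '<link rel="alternate" '
        f'media="only screen and (max-width: {max_width_px}px)" '
        f'href="{escape(mobile_url, quote=True)}">'
    )
    # Goes on the mobile page: "the desktop URL is the preferred version".
    mobile_tag = f'<link rel="canonical" href="{escape(desktop_url, quote=True)}">'
    return {"desktop_head": desktop_tag, "mobile_head": mobile_tag}

tags = separate_url_annotations(
    "https://example.com/page", "https://m.example.com/page"
)
print(tags["desktop_head"])
print(tags["mobile_head"])
```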

How to Find Duplicate Content

The first step to addressing duplicate content in SEO is to find out where it’s happening on your site (if at all).

Here are two ways to do that:

Audit Your Site to Identify Duplicate Content

Checking your site for duplicate content on a regular basis helps you fix problems early on.

You can comb through your pages manually if your site is small enough. But that’s inefficient. And you might miss some pages.

So, we suggest running your site through Semrush’s Site Audit tool.

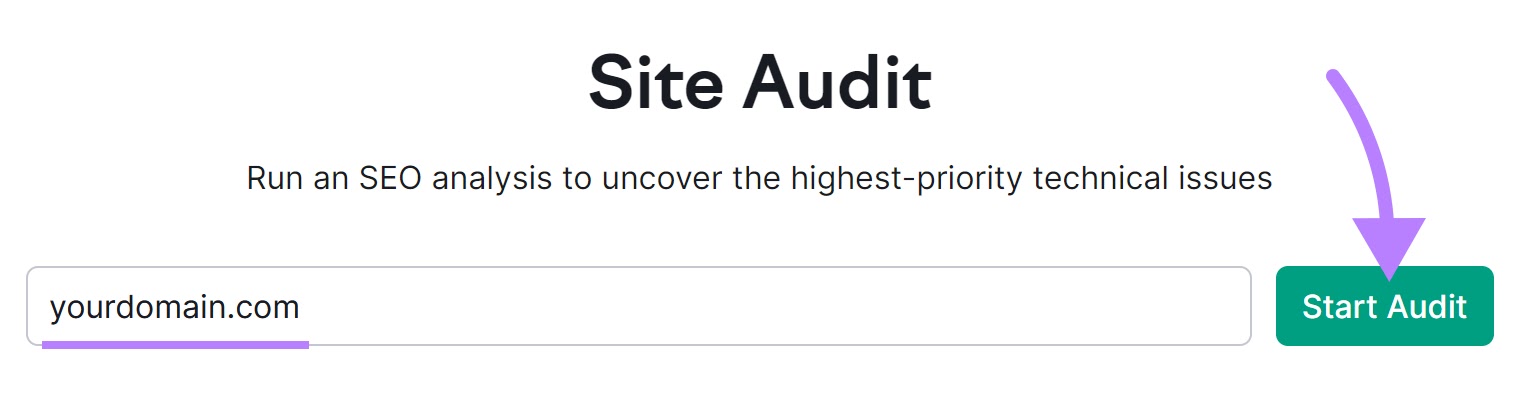

To get started, open the tool, enter your URL in the search bar, and click “Start Audit.”

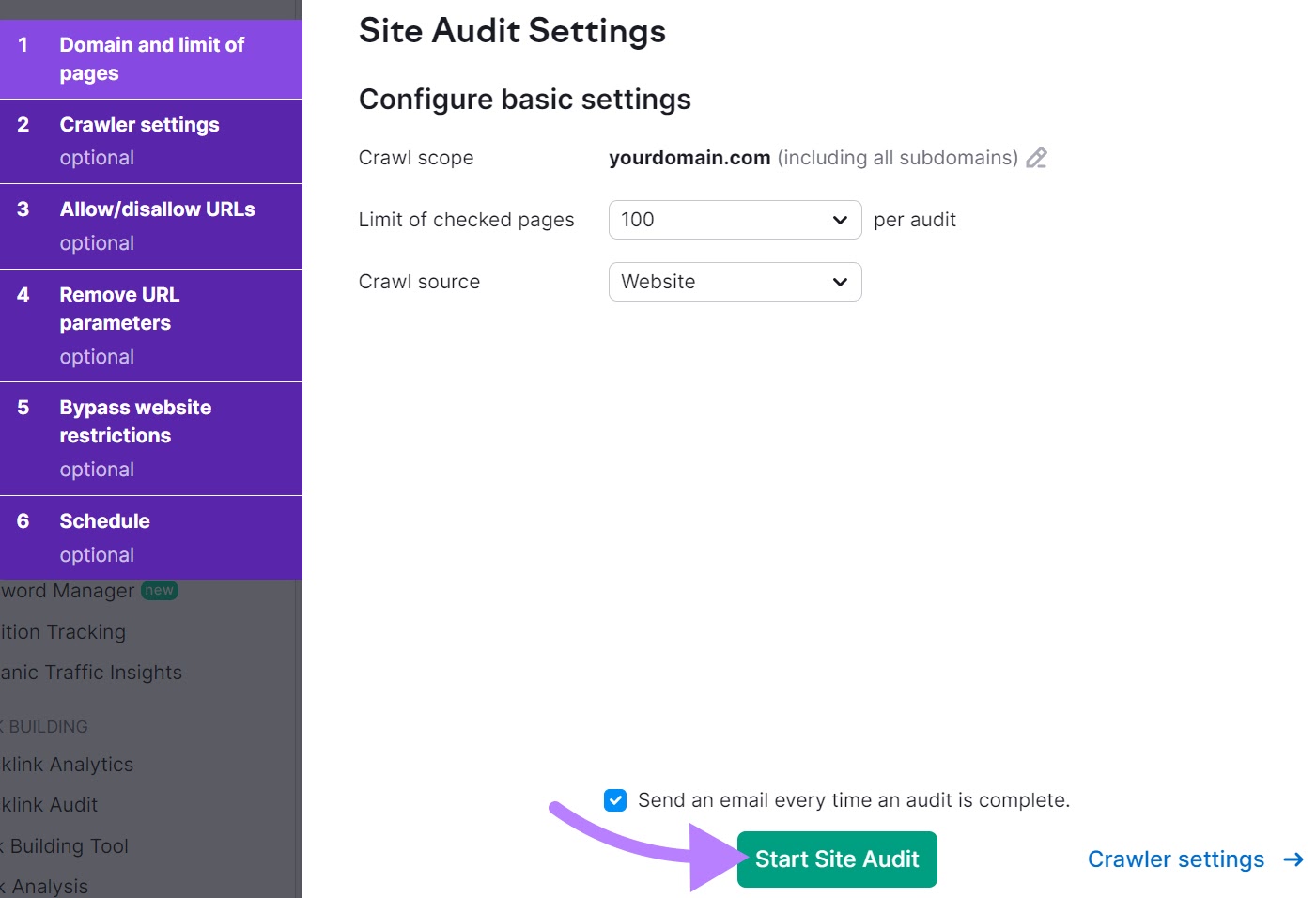

Next, you’ll be asked to configure the basic settings of the crawl. This includes setting a limit for checked pages and an auditing frequency. You can follow this step-by-step guide to configuring your audit to get through the settings.

When you’re ready, click on “Start Site Audit.”

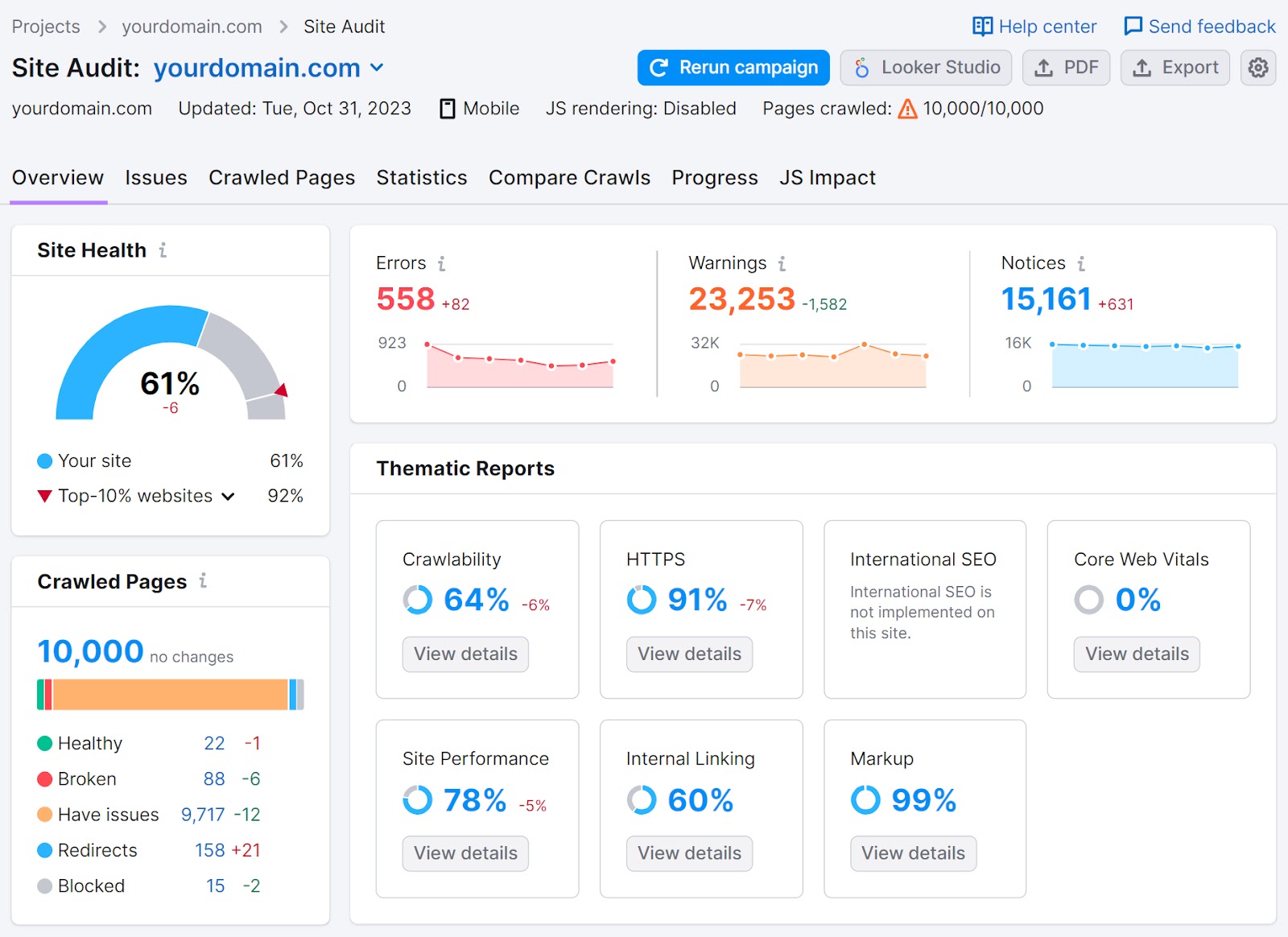

When your results are ready, you’ll see a dashboard similar to this one:

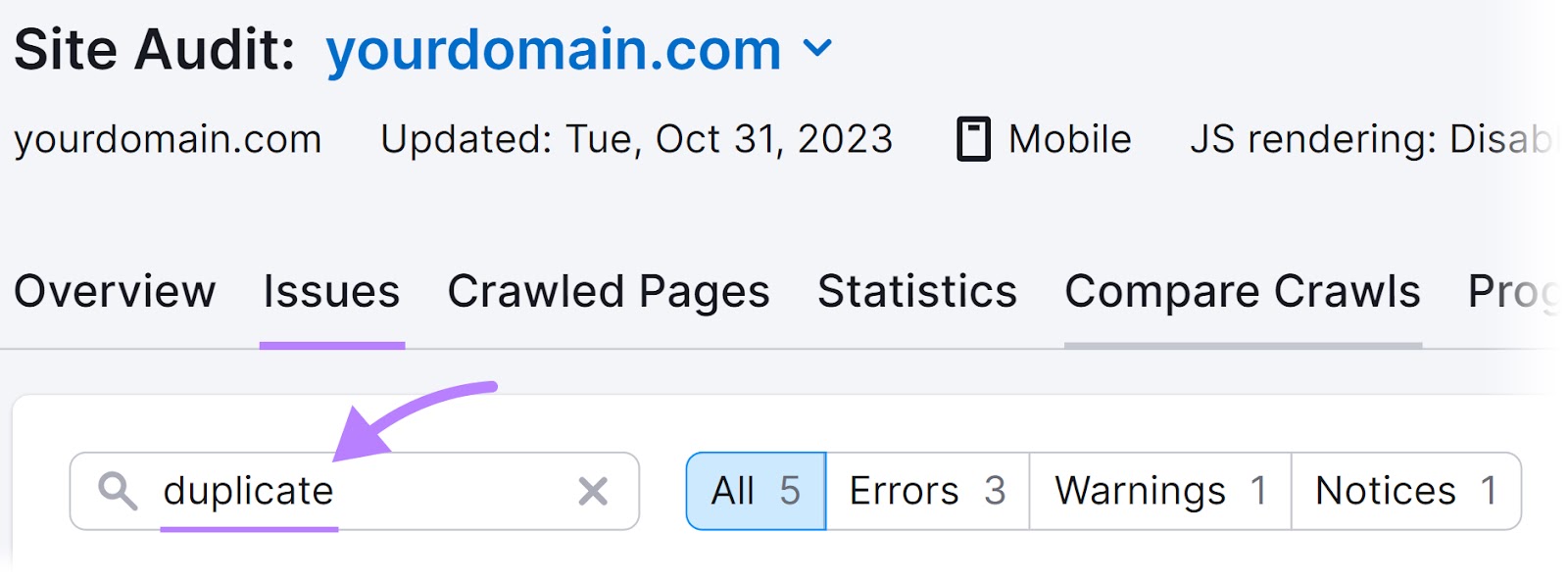

Click on the “Issues” tab to see a complete list of technical issues and the number of pages they affect.

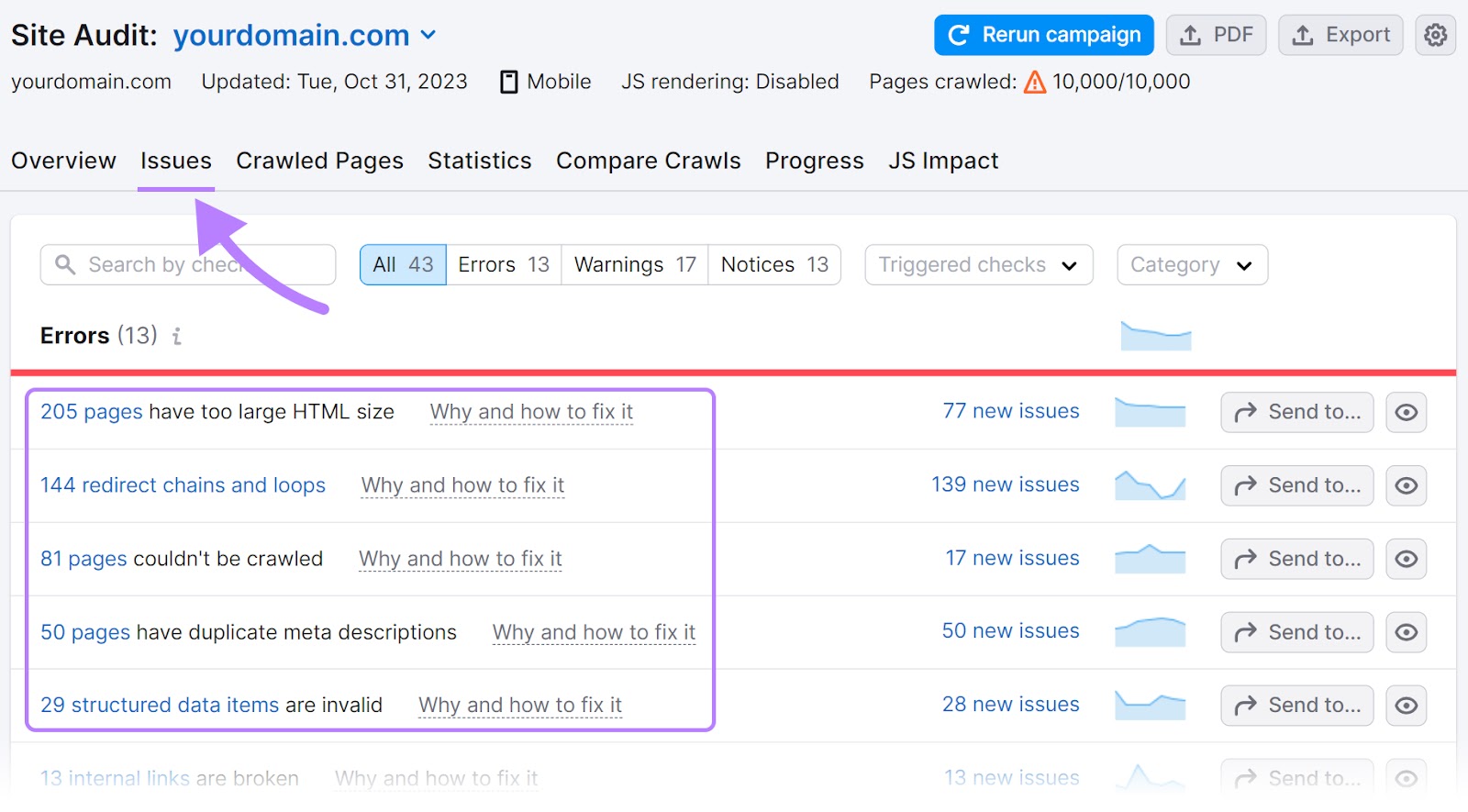

Then, enter “duplicate” in the search bar above the list of technical issues.

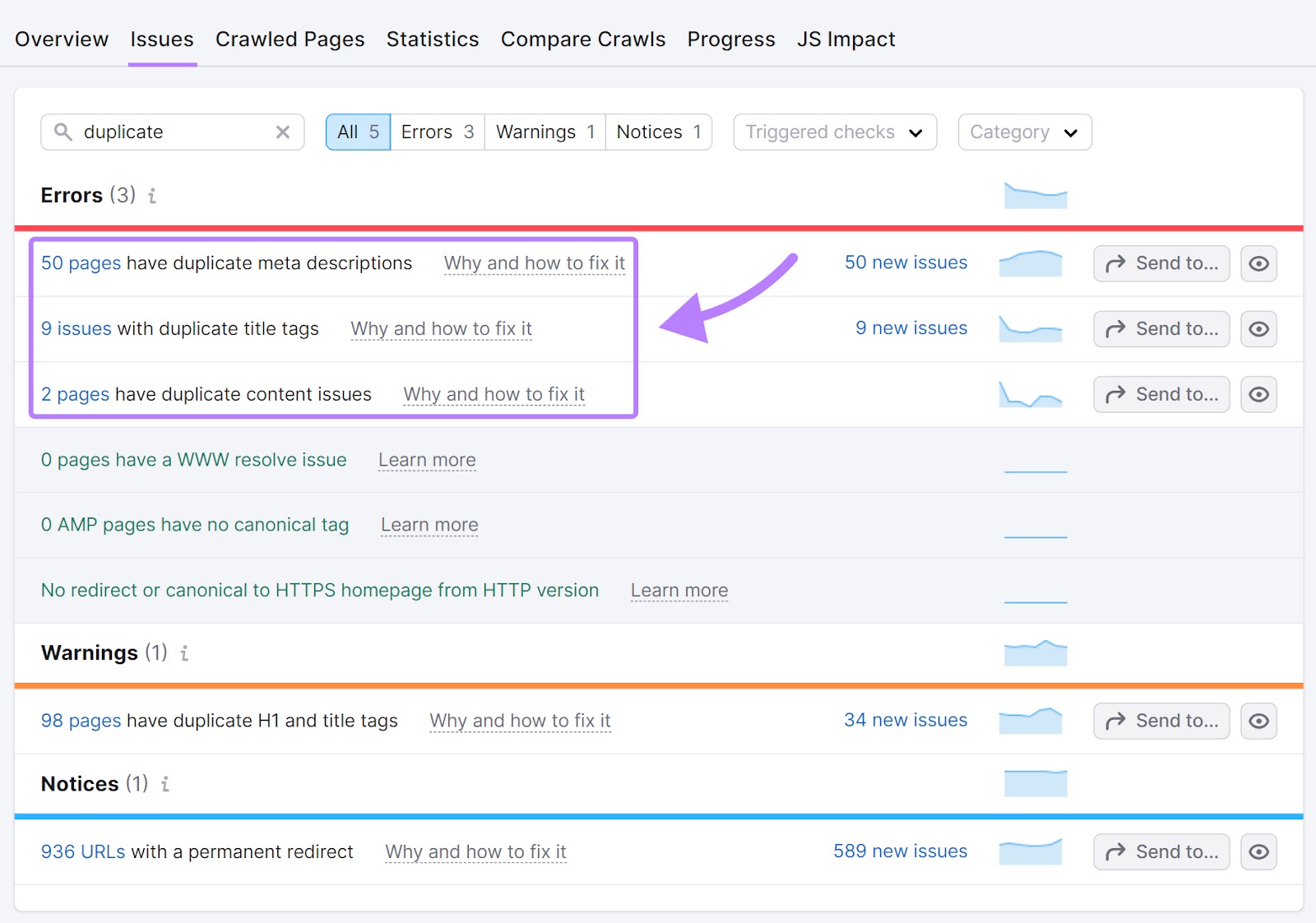

Site Audit flags pages as duplicate content if their content is at least 85% identical. It also flags duplicate titles and meta descriptions.
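To get a feel for what an 85% threshold means, here's a rough Python sketch that compares page text pairwise and flags pairs at or above that level. It uses the standard library's `difflib` as a stand-in. How Site Audit actually measures similarity isn't described here, so treat the scoring method, the function names, and the sample pages as assumptions.

```python
from difflib import SequenceMatcher
from itertools import combinations

THRESHOLD = 0.85  # the 85% figure mentioned above

def similarity(text_a, text_b):
    """Word-level similarity between 0.0 and 1.0 (difflib's ratio)."""
    return SequenceMatcher(None, text_a.split(), text_b.split()).ratio()

def find_near_duplicates(pages, threshold=THRESHOLD):
    """Return (url_a, url_b, score) for every pair at or above the threshold."""
    flagged = []
    for (url_a, text_a), (url_b, text_b) in combinations(pages.items(), 2):
        score = similarity(text_a, text_b)
        if score >= threshold:
            flagged.append((url_a, url_b, round(score, 2)))
    return flagged

# Hypothetical page text, keyed by URL path.
pages = {
    "/gardening/planting-flowers":
        "Plant flowers in spring after the last frost and water them weekly",
    "/flowers/planting-flowers":
        "Plant flowers in spring after the last frost and water them daily",
    "/about": "We are a small team of gardeners based in Ohio",
}
flagged = find_near_duplicates(pages)
print(flagged)
```

Comparing every pair gets slow on large sites, which is one reason a dedicated crawler is the more practical option.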

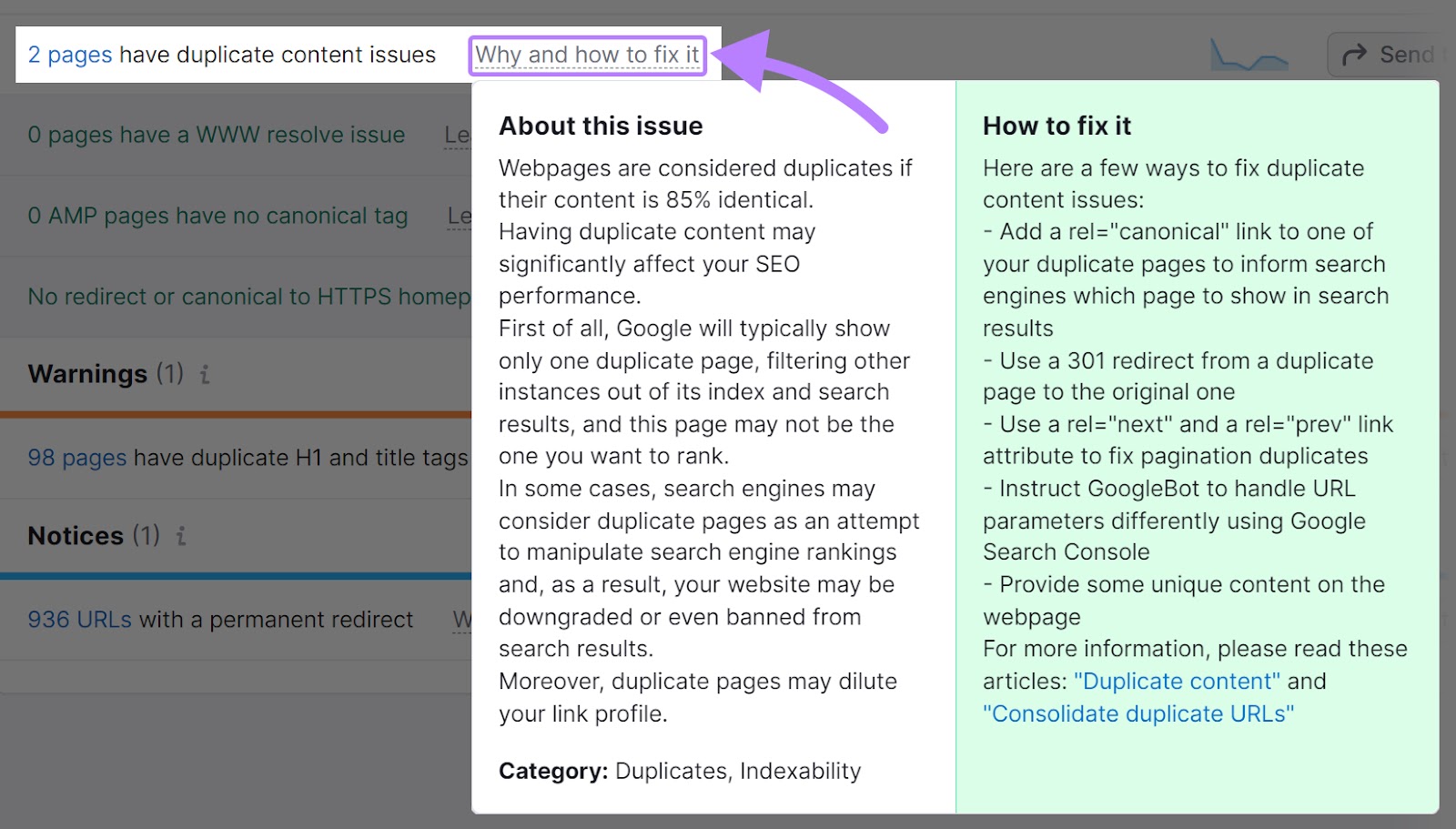

If your domain has any duplicate pages, you’ll see a “Why and how to fix it” link in the same line.

Click on it to see a pop-up with more information on the given issue and how you can fix it.

Monitor Indexed Pages in Google Search Console

Google Search Console (GSC) is a free tool you can use to see whether all your pages are indexed. And which ones aren’t.

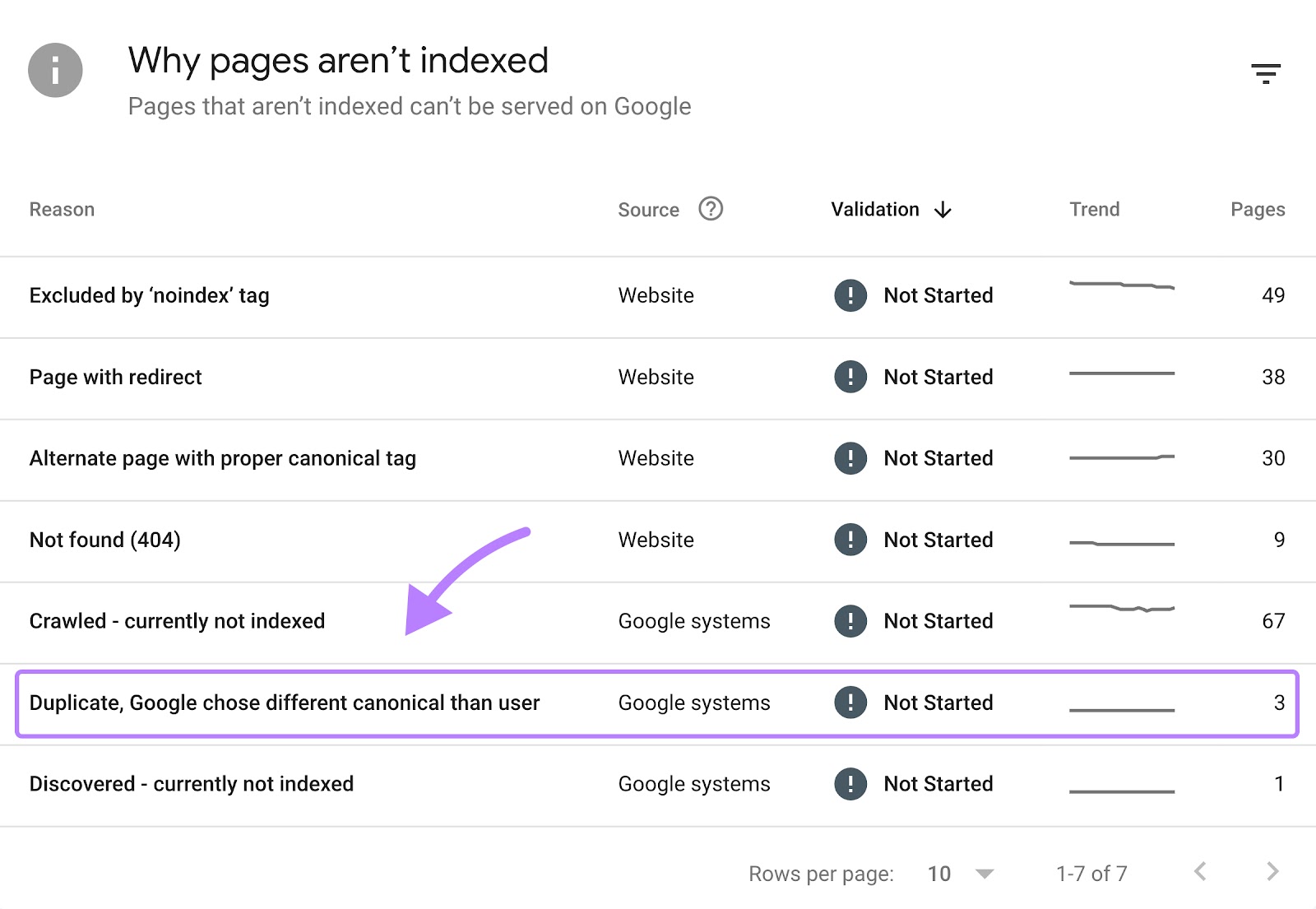

The tool also tells you why pages aren’t indexed. And one of those reasons is duplicate content.

To get started, set up GSC. If you’re not sure how, check out Semrush’s guide to Google Search Console for a step-by-step walkthrough.

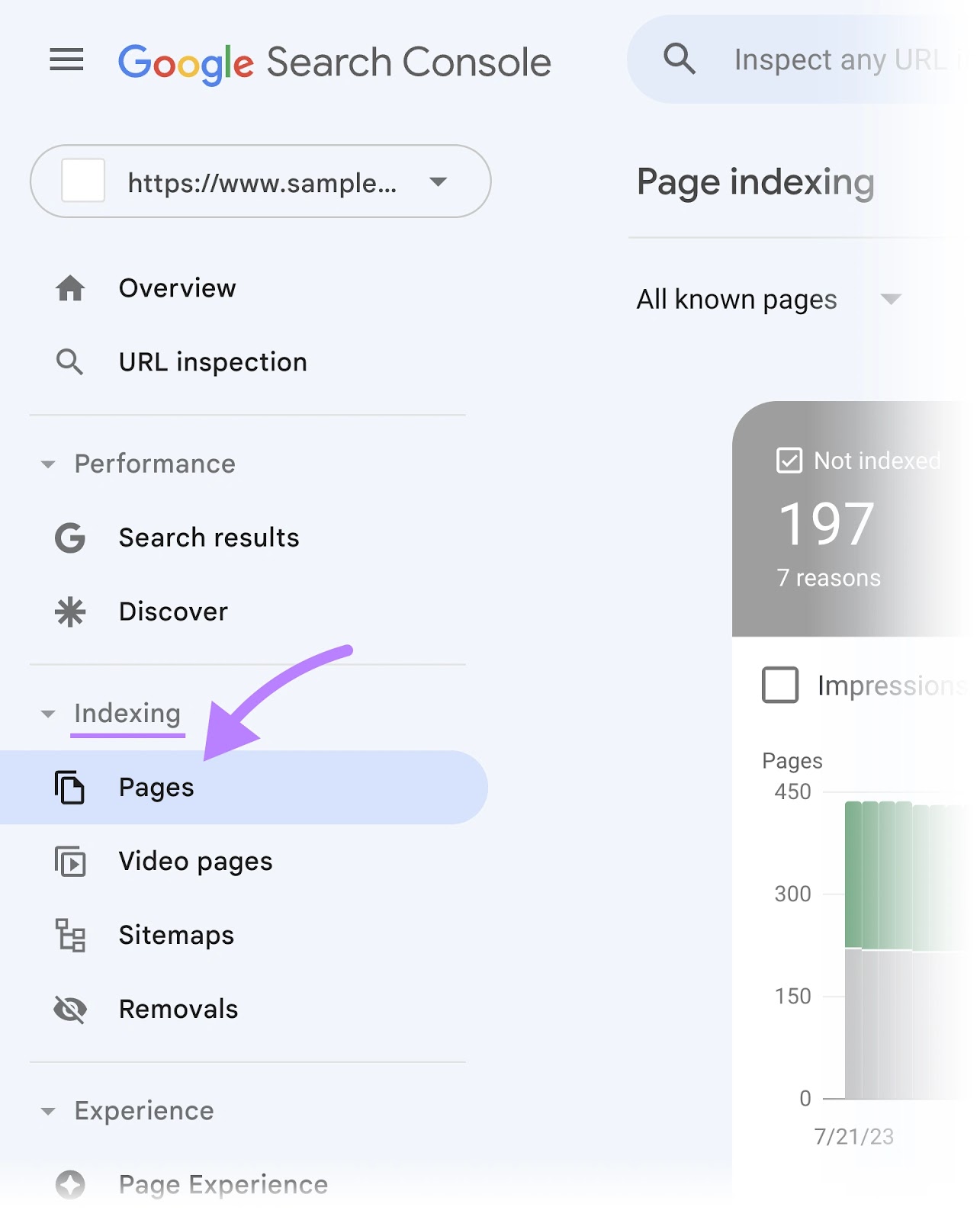

Then, click on the “Pages” tab under the “Indexing” section in the left-hand menu.

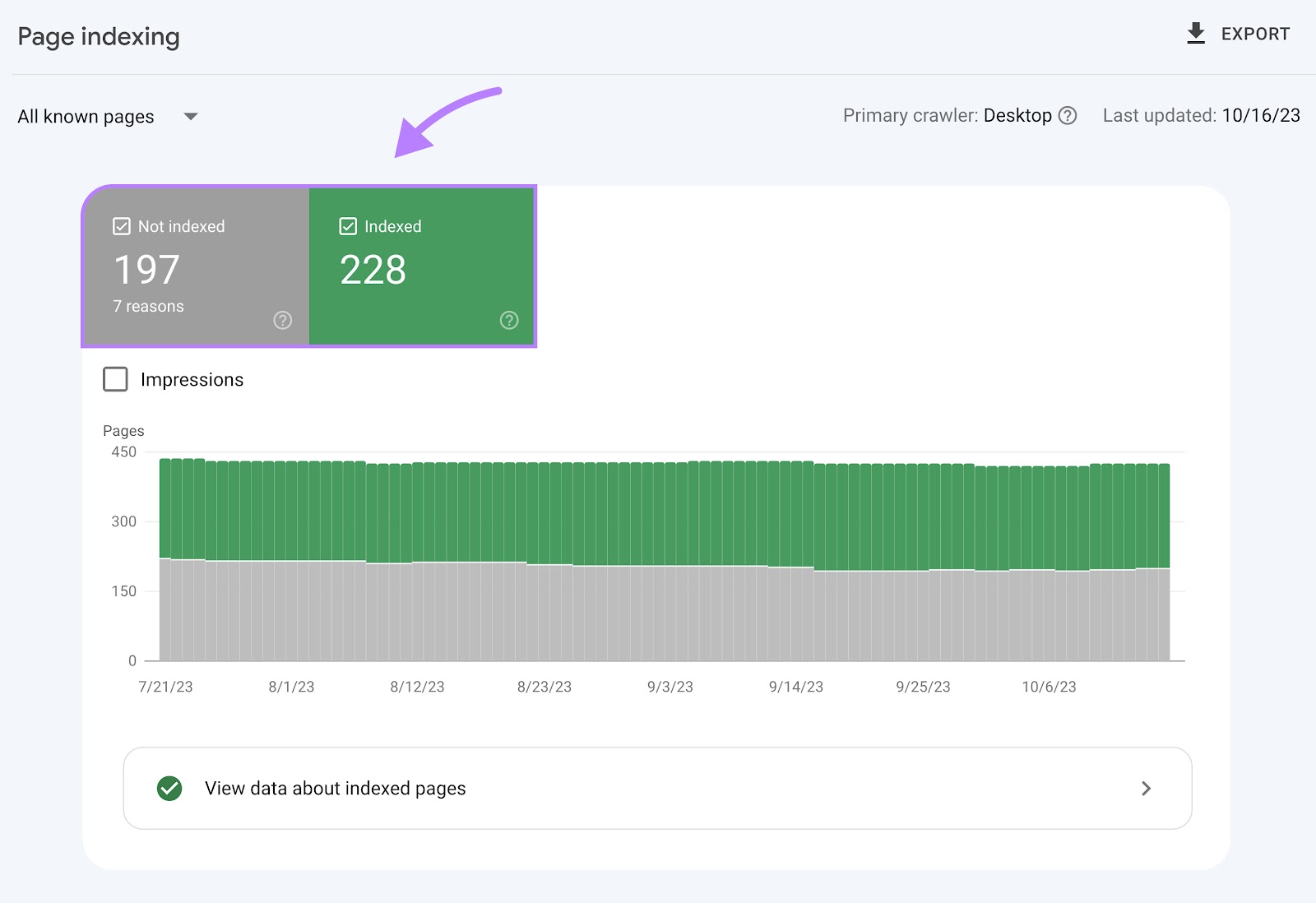

You’ll see a chart that tells you how many pages are indexed. And how many pages aren’t.

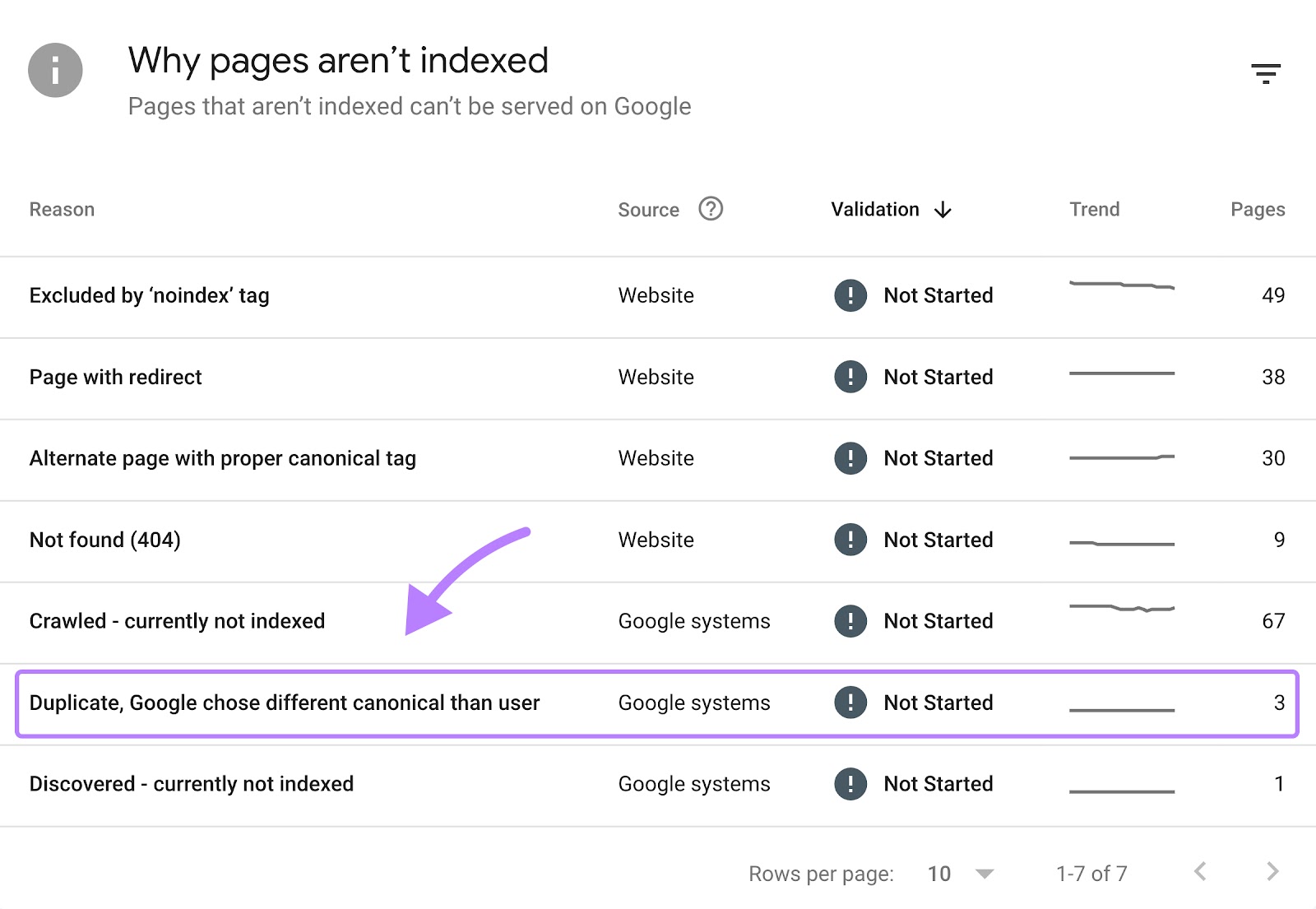

Scroll down to see the reasons why your pages weren’t indexed.

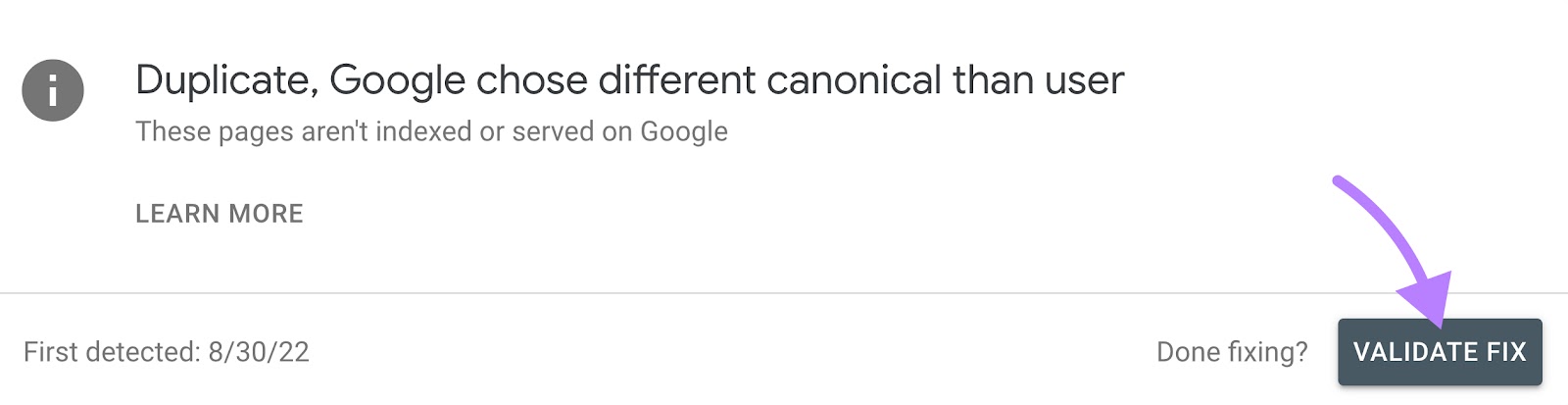

To get a list of your duplicate pages, click on the “Duplicate, Google chose different canonical than user” error if you have it.

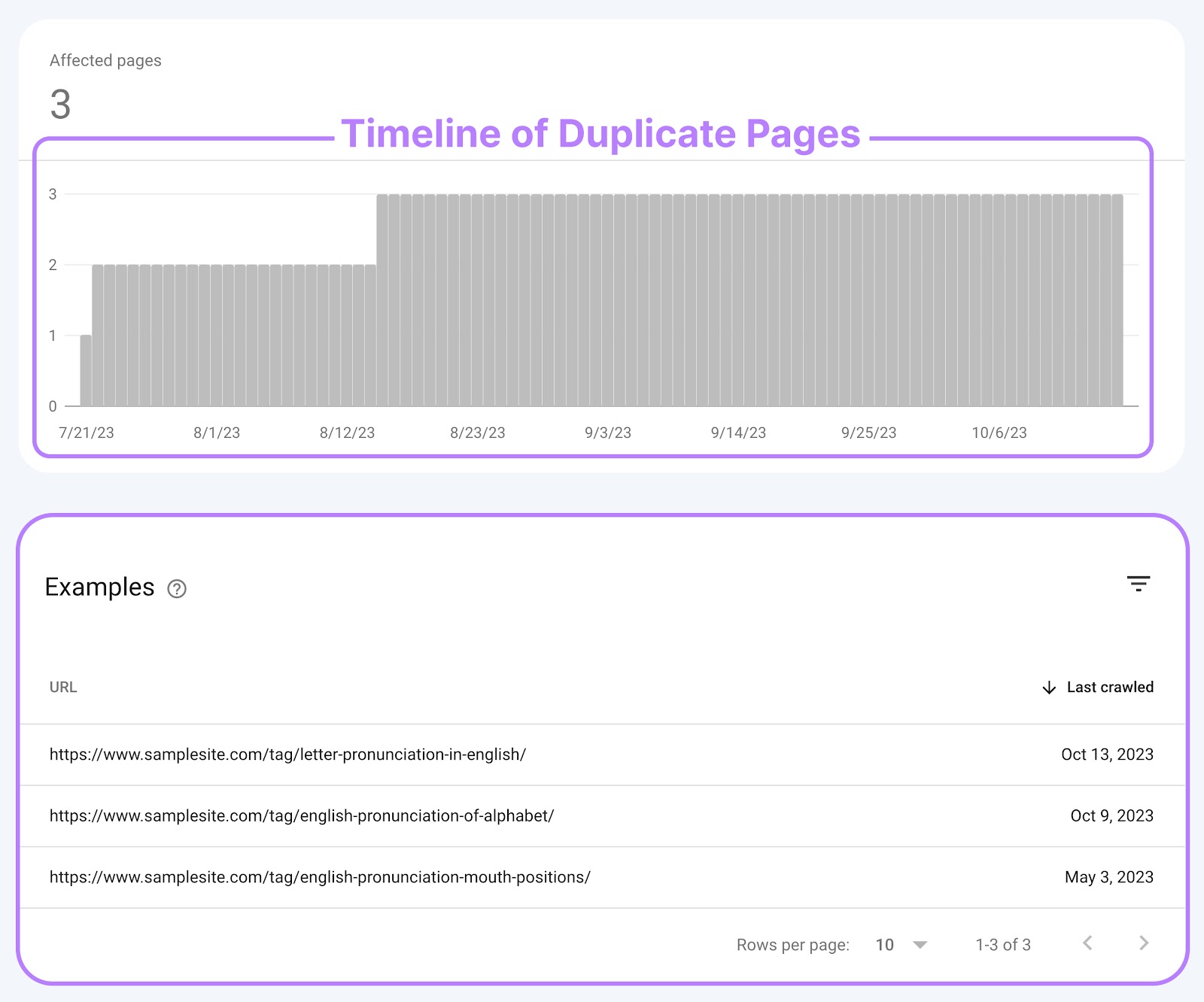

Doing this will open a report that shows you a chart of how many affected pages you’ve had over time. And a list of pages with duplicates.

You can fix the issue using one of the methods described below. Then, click “Validate Fix” to prompt Google to check your site.

How to Fix Duplicate Content Issues

Now, it’s time to go over what you can do to avoid problems related to duplicate content. Or remedy current issues.

Here are two methods you can use:

Implement Canonical Tags

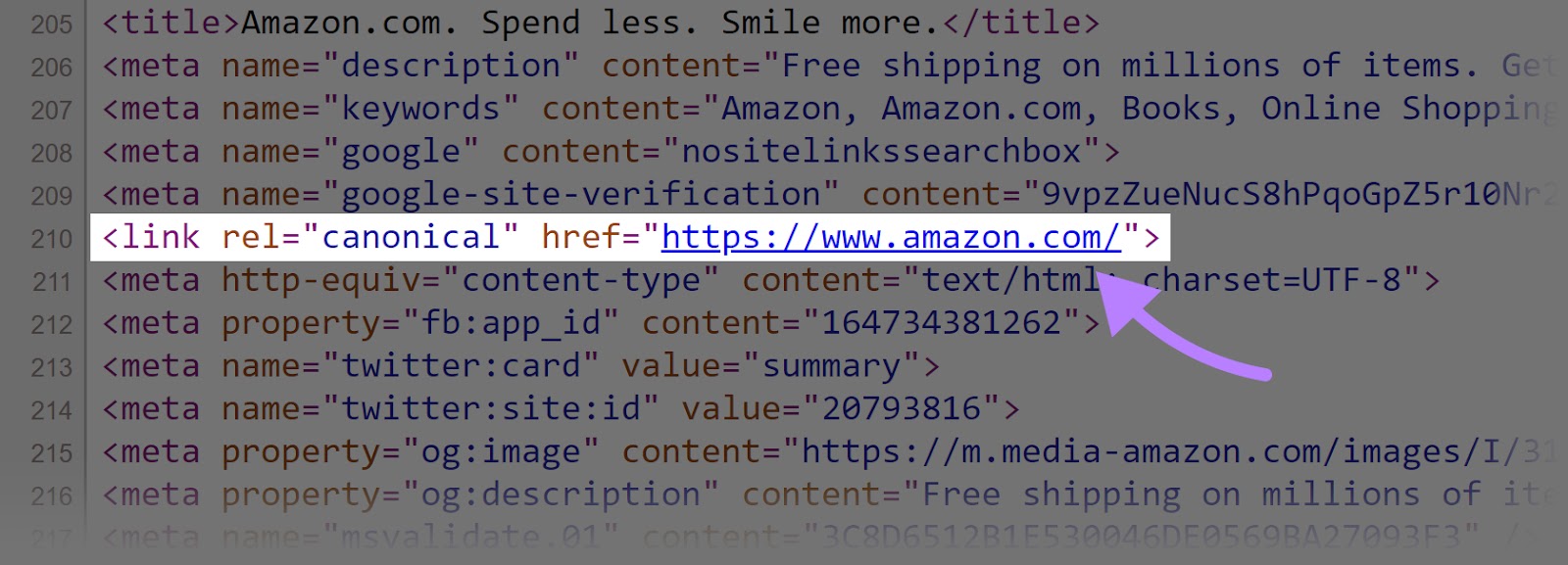

Canonical tags (also called rel="canonical" tags) are snippets of HTML code that specify the preferred URL for duplicate or highly similar content.

A canonical tag tells search engines which version of your page you want them to index and display in search results.

You can find the tag in the <head> section of a website’s HTML code. Here’s an example of what it looks like:
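A canonical tag is a single `<link>` element with rel="canonical" and an `href` that points to the preferred URL. As a minimal sketch, this Python helper builds one and prints it. The function name and the example.com URL are placeholders.

```python
from html import escape

def canonical_tag(preferred_url):
    """Return the <link> element to place inside the page's <head>."""
    return f'<link rel="canonical" href="{escape(preferred_url, quote=True)}" />'

print(canonical_tag("https://www.example.com/page/"))
# prints: <link rel="canonical" href="https://www.example.com/page/" />
```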

Self-referential canonical tags (meaning tags on a page that point to the page itself) can also protect your content from scrapers. That’s because they tell search engines that the page they’re on is the original, authoritative source.

If scrapers copy your content and don’t include this tag correctly, search engines are more likely to recognize your page as the original.

Adding a canonical tag to your page will differ based on what content management system you’re using—WordPress, Webflow, etc.

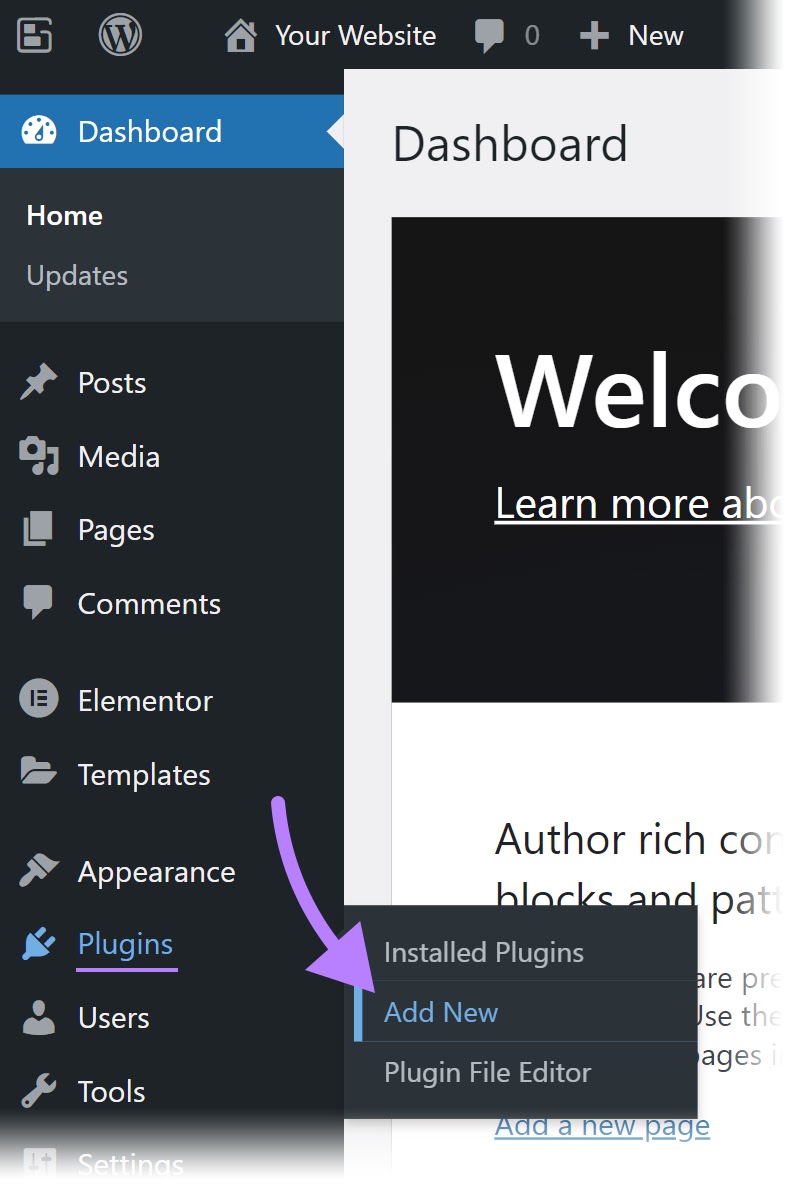

The easiest way to do it in WordPress is with the Yoast SEO plugin.

First, sign into your WordPress account.

Then, add Yoast SEO to your WordPress site by clicking on “Plugins” > “Add New” in the left-hand menu.

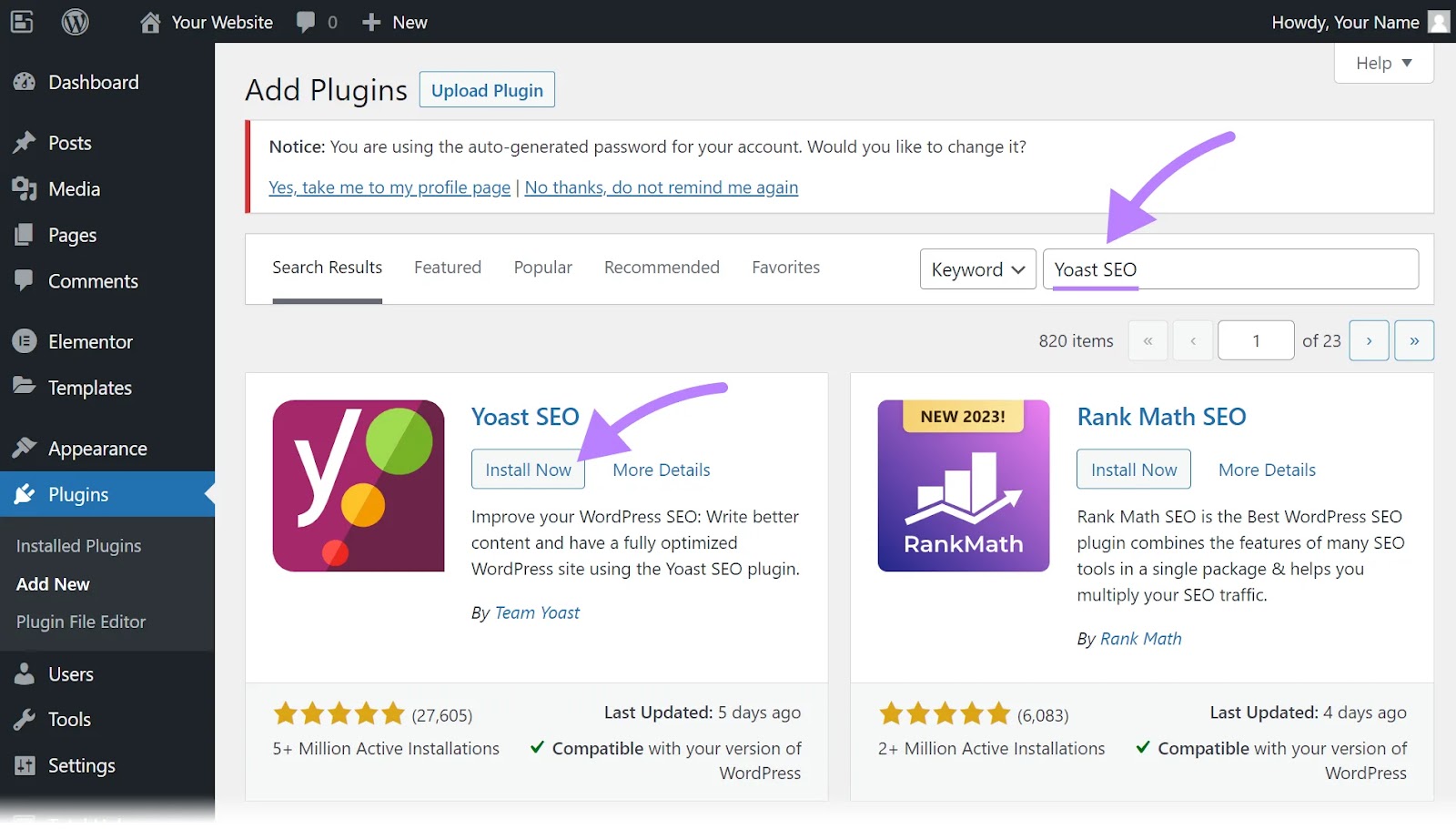

Type “Yoast SEO” in the search bar. Then, find the plugin and click “Install Now.”

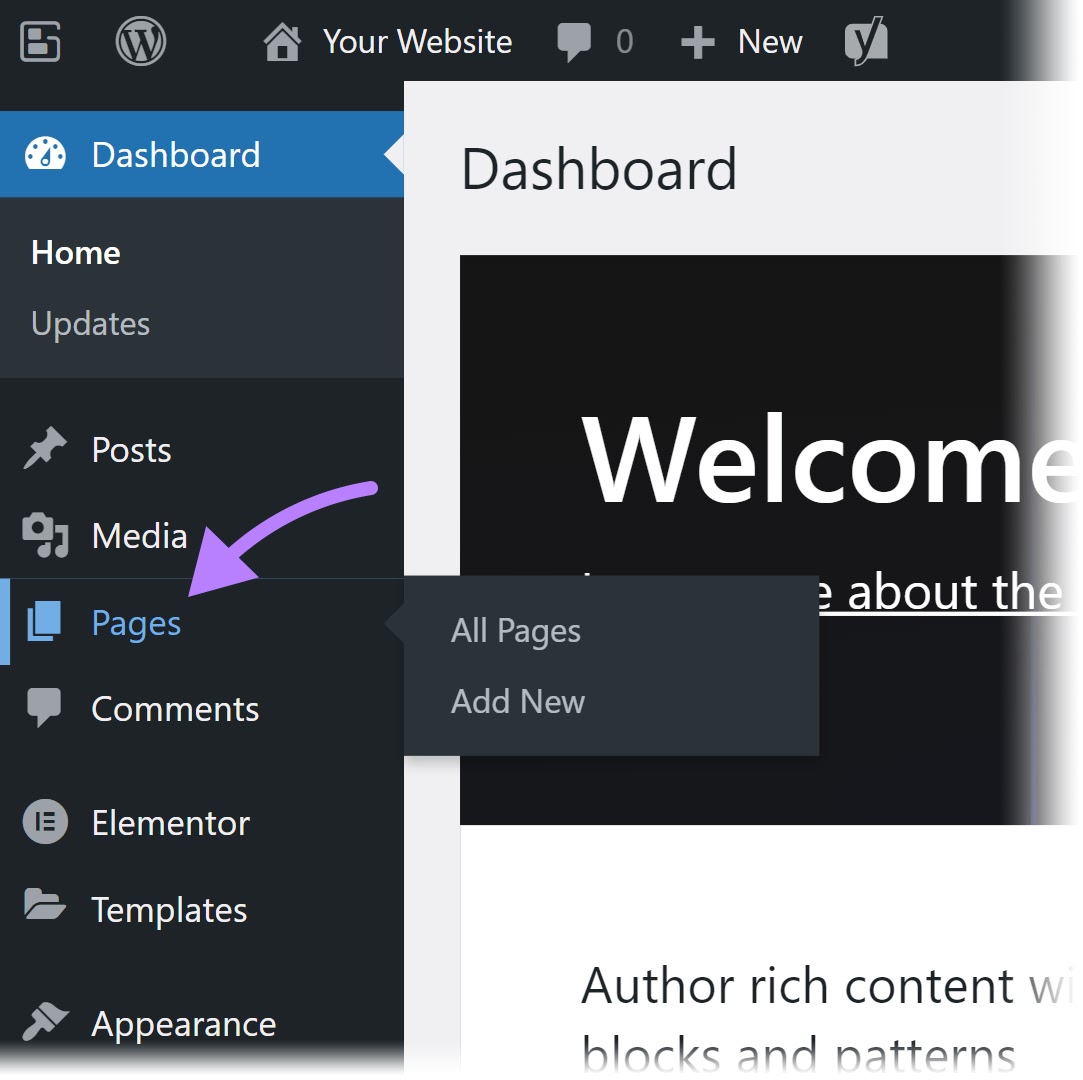

After installing the plugin and setting it up, click on “Pages” in the sidebar and navigate to one of your duplicate pages.

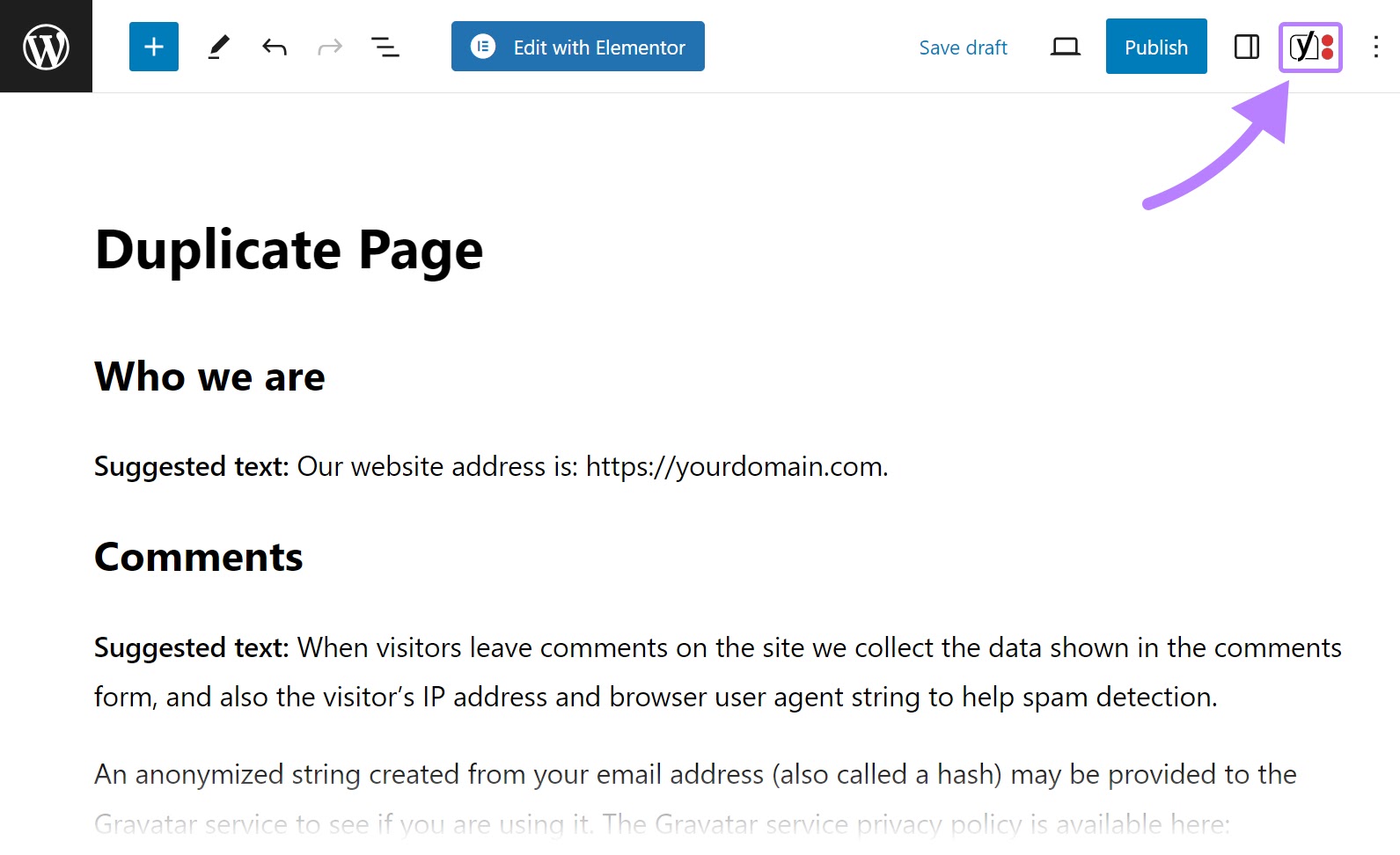

Then, open the Yoast SEO sidebar by clicking on the Yoast SEO logo found at the top right corner of your screen.

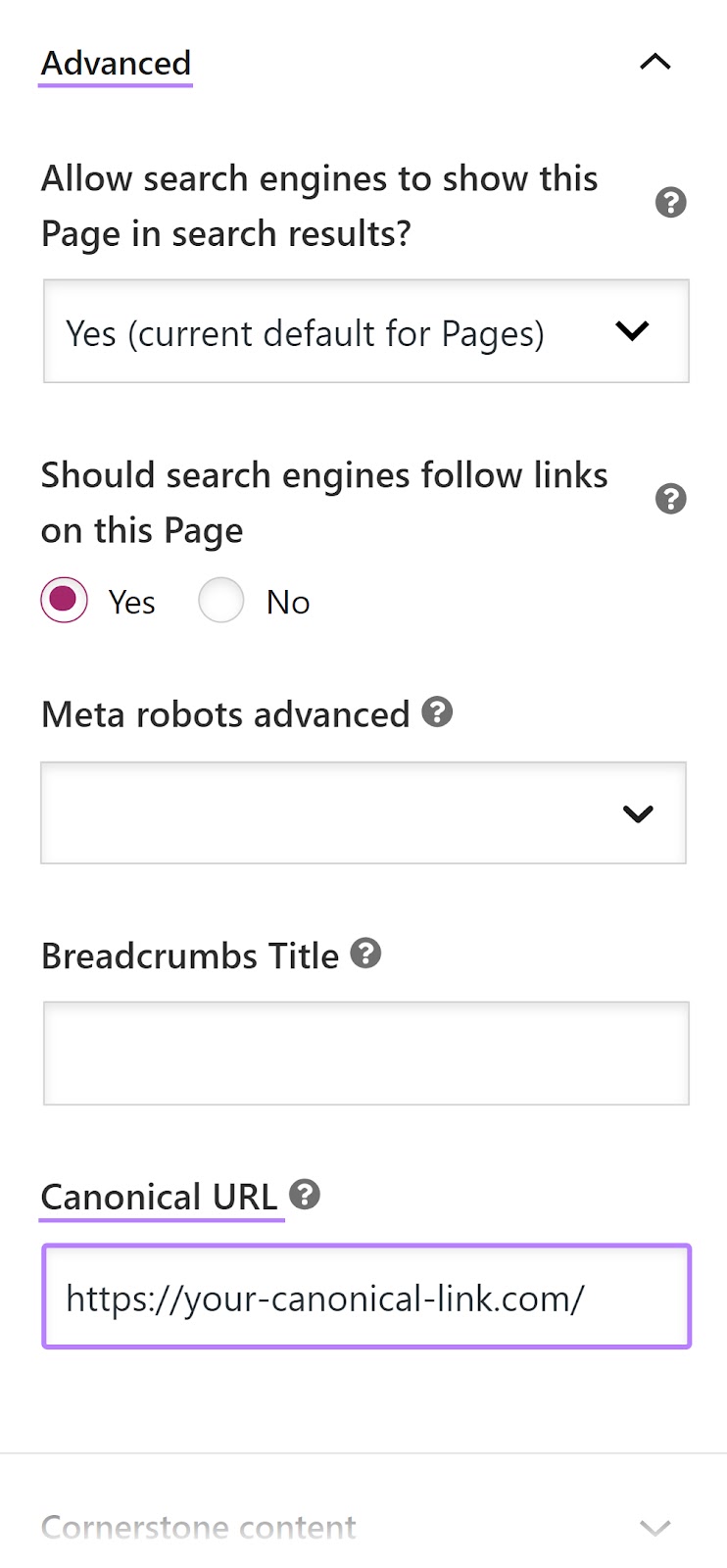

Scroll through the sidebar until you see “Advanced.” Click it to unfurl and enter the canonical link in the space under “Canonical URL.”

If the page is a duplicate, then add the URL of the page that you want Google to index into the space. If you’re on the page that you want indexed, then enter that page’s URL to create a self-referencing canonical tag.

Once you’ve inserted the canonical tag, use Semrush’s Site Audit to test your implementation. And see if the number of duplicate pages has decreased.
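For a quick spot check of a single page, you could also read the canonical tag straight out of its HTML. The sketch below uses Python's built-in `html.parser`. The class and function names are hypothetical, the sample markup is made up, and fetching the live page is left out.

```python
from html.parser import HTMLParser

class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if "canonical" in rels and attr.get("href"):
            self.canonicals.append(attr["href"])

def find_canonicals(html_text):
    """Return all canonical URLs declared in the given HTML."""
    finder = CanonicalFinder()
    finder.feed(html_text)
    return finder.canonicals

# Made-up markup for one of the duplicate pages from the earlier example.
sample = """<html><head>
<title>Planting Flowers</title>
<link rel="stylesheet" href="/style.css">
<link rel="canonical" href="https://www.gardeningwebsite.com/gardening/planting-flowers" />
</head><body><p>...</p></body></html>"""

found = find_canonicals(sample)
print(found)
```

A page should declare exactly one canonical URL, so an empty list or a list with several entries is worth a closer look.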


Implement 301 Redirects When Needed

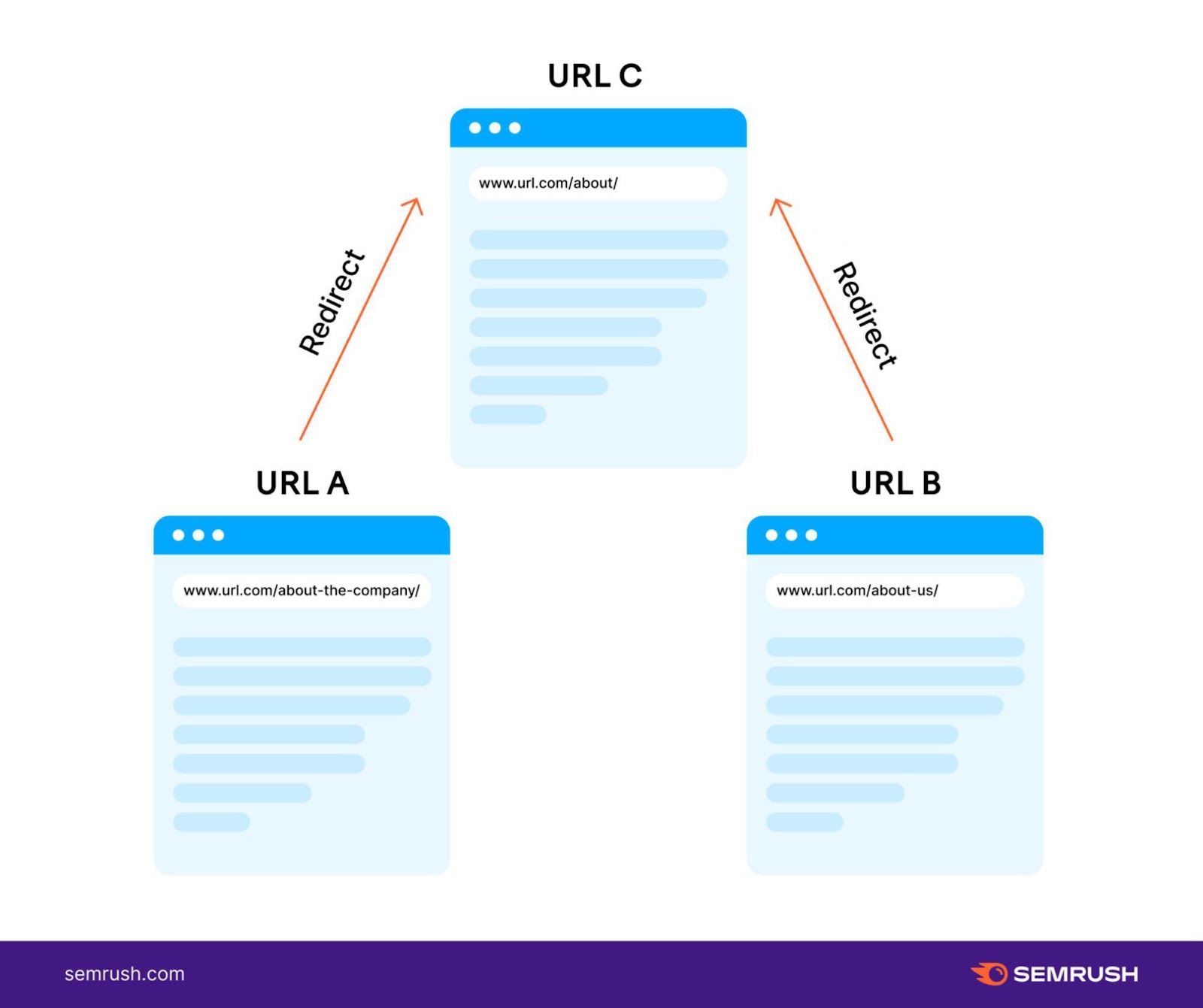

A 301 redirect permanently redirects users and search engines from one URL to another. This method is best for duplicates you don’t need to keep (like after you’ve switched from HTTP to HTTPS or when you’ve moved a page to a new URL).

Let’s say you’ve changed your about page’s URL from “www.url.com/about-the-company” to “https://url.com/about.”

You’ll want to redirect the old URL to your new URL. To ensure users and search engines end up on the correct page.

Some hosting companies will automatically implement a 301 redirect when you change a page’s URL. But the exact steps to implementing a 301 redirect depend on your server and the content management system (CMS) you use.
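To show what a 301 looks like at the server level, here's a minimal sketch written as a Python WSGI app. It reuses the about page example from above. The `REDIRECTS` table and the `app` function are hypothetical, and the catch-all 404 stands in for whatever your real site would serve. Your server or CMS will have its own way of setting this up.

```python
# Hypothetical table of retired paths and the URLs that replace them.
REDIRECTS = {"/about-the-company": "https://url.com/about"}

def app(environ, start_response):
    """Answer retired paths with a permanent redirect to the new URL."""
    path = environ.get("PATH_INFO", "/")
    target = REDIRECTS.get(path)
    if target:
        # 301 tells browsers and crawlers that the move is permanent.
        start_response("301 Moved Permanently", [("Location", target)])
        return [b""]
    # Placeholder: a real site would serve its normal pages here.
    start_response("404 Not Found", [("Content-Type", "text/plain")])
    return [b"Not found"]
```

To try it locally, you could serve `app` with the standard library's `wsgiref.simple_server.make_server` and request the old path in your browser.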

For detailed instructions, check out our guide to 301 redirects.

Monitor and Audit Your Content with Semrush

Duplicate content can have a negative impact on SEO. It can lower your ranking potential and hurt your website’s crawlability.

But there are ways to avoid duplicate content issues. And solve problems before they start to impact your website’s performance.

Use Semrush’s Site Audit tool to regularly monitor your site’s health. And quickly see if you have any issues with duplicate content across your website.